On one of my shoots for our Teen Challenge program in Wichita, Kansas, I tried a slightly different approach. To reach a new wave of audience and make use of recently released tech tools, I am transitioning from the traditional A/B-roll stock approach to AI storytelling.

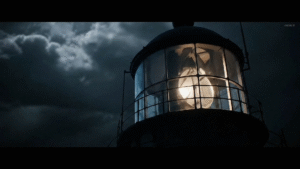

This is my first attempt at editing a storytelling experience with AI-generated clips. Out of nearly four hundred tries and misses with Google Veo3, over a hundred and fifty prompts produced generated clips, and dozens of those made it into the edit as usable shots. I'm quite impressed with the amount of detail I was able to use creatively on the platform. After running my own comparison tests between Sora and Veo, I found that Veo won hands down for its balance of quality and controls.

Even though Veo is still in its early stages, it comes with a collection of basic character tools. However, its approach feels a bit amateur: it gives users four separate tools to combine into a storytelling experience, when you could just as easily go deep with other tools and bring those assets into Google Veo. For instance, there's Flow, the main AI video generator, and Whisk, a user-friendly image generator that can combine multiple styles into one image and then animate it. Then there's another tool that is basically a text-to-image system, and finally MusicFX, Google's "attempt" at generating music from text prompts, which is pretty rough right now. At the time of writing, it produces what we used to call "music" back in the 90s, especially the crumpled MIDI-like sound of the Sound Blaster Live era. It feels a bit like generating karaoke tracks.

Google Veo still has work to do, especially with camera controls and movement. Even if you craft the prompt just right, you might get only about 40% of what you're aiming for. A prompt like "over-the-shoulder, using an f3.0 Sony lens – tight dramatic shot," written to achieve an out-of-focus, slightly POV cinematic style, doesn't always deliver.

As an editor, I was frustrated, because in pre-production I plan and capture exactly what I need to tell the story in post. Not being able to reliably get what I wanted out of the AI shots meant lots of trial and error, often around twenty prompts before I had something usable, and only then could I stitch all the shots into a cohesive virtual piece. It's challenging.

Another problem is that AI tools have so many text filters. For instance, in the final scene, where the second character, representing Jesus, pulls back the raincoat hood of the wet and beaten sailor, I spent about fifty prompts trying various phrasings and angles and describing the character's movement in great detail. Half the time it returned a warning that the prompt might violate the terms of service, and the rest of the time it either couldn't produce the hand motion or changed the character's appearance. As an artist, I had to shift from the control I was used to in an analog approach to working within the limits of what the AI could give me.

Some points worth noting: Veo3 can add good-quality sound effects to generated videos, although they sometimes feel a bit over-dramatic. I've also run into censorship issues. In another story outline, about the history of Teen Challenge in New York, the tool often flags uploaded photos as showing minors or as unusable content when I try to animate David Wilkerson's time as a pastor on the streets. Sometimes working the subtleties of Google Veo and Gemini lets you "cheat" your way past these filters. When working with old 35mm prints, upscaling to 4K with Topaz Labs also helps: the film grain and fuzziness blend the artifacts of AI generation into workable clips.

The positives: AI video is quickly changing how we interact with the audience and opening up new kinds of visuals. As AI gets better at producing realistic video, it will do more than create hyper-realistic utopian cities and funny TikTok reels; it will give non-fiction writers a new tool at a fraction of the cost of traditional production. It feels like every month brings new tools for creatives, pushing beyond online generators like Syllaby that once boasted about letting you make your own talking-head avatars, and soon the output will be good enough for photorealistic storytelling in any genre.

My fear is that blended AI scenes and generated content, not only video but also text, will become so generic as the new medium that people will lose the habit of thinking creatively and settle into artificial art forms, calling them their own style. Our ability to think, reason, comprehend, and dream could be lost in "picture-perfect" imagery.

With every generation of tools, there's also a chance to embrace the weaknesses of realism and present something unpolished for God's Spirit to flow through. If we forget our God-given ability (He created, and said it was good), we come dangerously close to believing we must reach a certain standard before we, as Christian artists, can be used as we are.